
That statement above seems clear and explicit enough to be contradicted very easily if the science of catastrophic anthropogenic global warming is truly settled. But it won't be contradicted, because it can't be: there are no observations of temperatures, of storms, of ice, of sea level, etc. that show cause for alarm over anything extraordinary happening. [The quote comes from a recently published report, linked to below, from climate experts in Australia (hat-tip Greenie Watch).]

Given that observations are not there to show dangerous global warming is occurring, what is causing all the fuss?

All we have are computer models programmed to give each additional bit of CO2 a warming contribution at the surface of the Earth, and theory which says that this by itself should produce effects that we would have the greatest difficulty in discerning against the many other sources of variation in the climate system.

For example, a doubling of CO2 levels might produce no more than 1C increase in the computed global mean temperature, and quite plausibly 0.5C or less. No cause for alarm there. In fact, such a modest warming would be overwhelmingly beneficial given what we know of relatively warm spells in the past such as the Medieval Warm Period and the Roman Climate Optimum.

Now such good news, funnily enough, does not bring with it a need for increased state power, nor more power for the UN, nor more funds for what used to be primarily wildlife conservation or humanitarian organisations, nor more clients for crisis consultancies, nor more platforms for political extremists bent on destruction.

Imagine that! No starvation caused by using farmland to make fuel for vehicles instead of food for people, no pictures of flooded cities to frighten us, no children being told that polar bears will die unless they make their parents turn down the heating, no crippling of industrial development by discouraging conventional power stations around the world, no energy cost increases due to subsidies for renewables, and no damaging of the environment to make way for them, make them, and live with them. And no more jobsworths and consultants going on and on about that bizarre notion, the carbon footprint.

So, given that the actual weather, ice, and sea-level records show nothing extraordinary about the last 50 years or so, and given that the basic theory is for a modest increase in temperatures due to more CO2, where is the problem?

It lies inside those computer models. They display a positive feedback which amplifies the effect of the CO2 to produce far larger temperature increases: far larger than anything observed so far, not least since we have seen no overall increase at all over the last 10 to 15 years, let alone any rising trend in line with model projections.

These models have some practical value in extrapolating from and interpolating amongst observations of existing weather systems over a few days, not least because they can be frequently re-adjusted as new observations come in. That is weather. On climate, they have had no practical value whatsoever, and may be so misleading that we'd actually be better off without them.

Even their owners and operators admit they are not fit for predictive purposes, and can only be used to provide illustrations of what might possibly happen under various assumptions. Those illustrations have failed to reproduce important features of past climates when used retrospectively. They perform poorly on temperatures, and worse on everything else, such as precipitation. You would not want to bet your shirt button on them, let alone your entire way of life.

But let us go back to Australia, and this recent report (pdf) by Bob Carter, David Evans, Stewart Franks & William Kininmonth published by Quadrant Online. It is entitled

What's wrong with the science?

They wrote it in response to some recent reports by government agencies, which were apparently engaging in a PR campaign to try to increase public alarm over climate. They write:

Is there any substantive new science in the science agency reports? No.

Is there any merit in the arguments for dangerous warming that are advanced in the reports? No.

Did any mainstream media organisation question the recommendations in the reports in their mainline news reporting? No.

Was there any need for, or purpose served, by the reports? Yes, but only the political one of attempting to give credence to the impending collection of carbon dioxide tax.

They have a government down there whose lies over a carbon tax have been widely exposed, and whose decisions linked to alarmist projections about droughts and rising sea levels have been widely resented for diverting resources into building desalination plants and otherwise mismanaging water resources. They have also had green-inspired fiascos over home insulation and bushfires, both of which led to tragedies. A recent election in Queensland saw the vote for the party in power plummet very dramatically indeed, and since that party seems suicidally committed to the green dogma over climate, it would surely grasp at any PR straws that were presented to it.

And straw is all they got. See the report for more details of this, but also for a useful overview of the case against alarm over airborne CO2 as the authors shred, point by point, the shoddy claims being pushed by their government's agencies. They deal with temperatures, precipitation, sea levels, greenhouse gases, and ocean heat content. For example, on the last topic, they show this plot:

Note the divergence between models and reality - a very common leitmotif in climate alarmism.

How do teachers cope with this divergence? What do you say about the scare stories in the textbooks and websites targeting children about climate change, while news comes in of polar bears and penguins doing very well, of glaciers not disappearing on request, of snows also failing to be a thing of the past, of hurricanes not becoming more frequent or intense, of sea level rises not accelerating, of the great icecaps and sea ice doing nothing untoward, and, of course, of temperatures behaving just exactly as if the additional CO2 of the past 15 years has had no discernible effect on them?

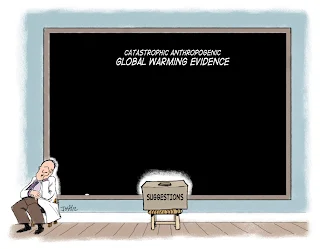

Note added 2nd May 2012: Josh (http://cartoonsbyjosh.com) captures it:

For some background, see: http://www.bishop-hill.net/blog/2012/5/2/cartoon-the-cartoon-josh-164.html